DeepFaceLab 2020/DFL2 Changelog

January 4, 2021

SAEHD: GAN is improved. It now produces fewer artifacts and a cleaner preview.

All GAN options:

GAN power

Forces the neural network to learn small details of the face.

Enable it only when the face is trained enough with lr_dropout(on) and random_warp(off), and don’t disable it afterwards.

The higher the value, the higher the chance of artifacts. Typical fine value is 0.1.

GAN patch size (3-640)

The higher the patch size, the higher the quality and the more VRAM is required.

You can get sharper edges even at the lowest setting.

Typical fine value is resolution / 8.

GAN dimensions (4-64)

The dimensions of the GAN network.

The higher the dimensions, the more VRAM is required.

You can get sharper edges even at the lowest setting.

Typical fine value is 16.

Comparison of different settings: https://i.imgur.com/6IgvsLN.png
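As a rough illustration of the "resolution / 8" rule of thumb for the GAN patch size, here is a minimal sketch. The helper name `suggested_gan_patch_size` is hypothetical and not part of DeepFaceLab; the clamping to 3-640 simply mirrors the range quoted for the option.

```python
def suggested_gan_patch_size(resolution: int) -> int:
    """Return resolution // 8, clamped to the 3-640 range quoted for the option.

    Hypothetical helper for illustration only; DeepFaceLab asks for this value
    interactively and may compute its default differently.
    """
    return max(3, min(640, resolution // 8))


if __name__ == "__main__":
    for res in (128, 256, 448):
        print(res, suggested_gan_patch_size(res))
```

For a 256 model this suggests a patch size of 32; treat it as a starting point and lower it if VRAM runs out.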

December 22, 2020

The load time of training data has been reduced significantly.

December 20, 2020

SAEHD:

lr_dropout can now be used with AdaBelief.

Eyes priority is replaced with Eyes and mouth priority.

Helps to fix eye problems during training like “alien eyes” and wrong eyes direction.

Also makes the detail of the teeth higher.

New default values with new model:

Archi : ‘liae-ud’

AdaBelief : enabled

2020年12月16日 (提速)

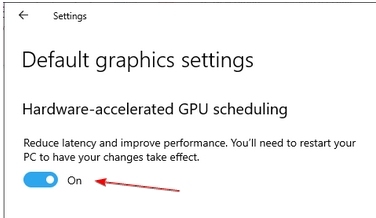

Windows 10 比较新的版本记得修改图形设置,启用GPU加速。

针对所有英伟达显卡的集成版

Now single build for all video cards.

深度学习框架TensorFlow升级到了2.4.0,同步升级 CUDA 11.2,CuDNN 8.0.5

Upgraded to Tensorflow 2.4.0, CUDA 11.2, CuDNN 8.0.5.

你不需要安装任何依赖(除了升级显卡驱动)

You don’t need to install anything.

December 11, 2020

Upgraded to Tensorflow 2.4.0rc4.

RTX 3000 series is now supported.

Video cards with Compute Capability 3.0 are no longer supported.

CPUs without AVX are no longer supported.

========== RTX 3000 series divider ==========

August 2, 2020

SAEHD: random_warp is now disabled by default in pretraining mode.

Merger: fixed the load time of XSeg when it has no model files.

July 18, 2020

Fixes

SAEHD: write_preview_history now works faster.

The frequency at which the preview is saved now depends on the resolution.

For example 64×64 – every 10 iters. 448×448 – every 70 iters.
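The two data points given for write_preview_history (64 → every 10 iterations, 448 → every 70) are consistent with a period that grows linearly with resolution. The sketch below is only that assumption written down; the function name and the formula are a guess fitted to those two examples, not the actual DeepFaceLab code.

```python
def preview_save_period(resolution: int) -> int:
    """Iterations between saved previews, assuming linear scaling with resolution.

    Assumption: 10 iterations per 64 pixels of resolution, which reproduces
    the two examples from the changelog (64 -> 10, 448 -> 70).
    """
    return max(1, resolution // 64) * 10


if __name__ == "__main__":
    for res in (64, 256, 448):
        print(res, preview_save_period(res))
```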

Merger: added option “Number of workers?”

Specify the number of threads to process.

A low value may affect performance.

A high value may result in memory error.

The value may not be greater than CPU cores.
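The constraint on “Number of workers?” can be sketched as a simple clamp against the CPU core count. `clamp_workers` is a hypothetical helper for illustration; the Merger's own input handling may differ.

```python
import os


def clamp_workers(requested: int) -> int:
    """Clamp a requested worker count to the range 1..CPU cores.

    os.cpu_count() can return None on some platforms, so fall back to 1.
    """
    cores = os.cpu_count() or 1
    return max(1, min(requested, cores))


if __name__ == "__main__":
    print(clamp_workers(8))
```

Starting below the core count and raising it gradually is one way to find the point where memory errors begin.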

July 17, 2020

SAEHD:

Pretrain dataset is replaced with high quality FFHQ dataset.

Changed help for “Learning rate dropout” option:

When the face is trained enough, you can enable this option to get extra sharpness and reduce subpixel shake in fewer iterations.

Enable it before “disable random warp” and before GAN.

n – disabled.

y – enabled.

cpu – enabled on CPU. This avoids using extra VRAM, at the cost of 20% longer iteration time.

Changed help for GAN option:

Train the network in Generative Adversarial manner.

Forces the neural network to learn small details of the face.

Enable it only when the face is trained enough and don’t disable.

Typical value is 0.1

Improved GAN. Now it produces better skin detail, less patterned aggressive artifacts, and works faster.

June 27, 2020

Extractor:

Extraction can now be continued, but you must specify the same options again.

Added ‘Max number of faces from image’ option.

If you extract a src faceset that has frames with a large number of faces, it is advisable to set max faces to 3 to speed up extraction.

0 – unlimited

Added ‘Image size’ option.

The higher the image size, the worse the face-enhancer works.

Use a value higher than 512 only if the source image is sharp enough and the face does not need to be enhanced.

Added ‘Jpeg quality’ option in range 1-100. The higher the jpeg quality, the larger the output file size.

Sorter: improved sort by blur and by best faces.

June 19, 2020

SAEHD:

Maximum resolution is increased to 640.

‘hd’ archi is removed. ‘hd’ was an experimental archi created to remove subpixel shake, but ‘lr_dropout’ and ‘disable random warping’ do that better.

‘uhd’ is renamed to ‘-u’.

dfuhd and liaeuhd will be automatically renamed to df-u and liae-u in existing models.

Added new experimental archi (key -d) which doubles the resolution using the same computation cost.

This means the same configs will be x2 faster, or for example you can set 448 resolution and it will train as 224.

Strongly recommended not to train from scratch and use pretrained models.

New archi naming:

‘df’ keeps more identity-preserved face.

‘liae’ can fix overly different face shapes.

‘-u’ increased likeness of the face.

‘-d’ (experimental) doubling the resolution using the same computation cost

Examples: df, liae, df-d, df-ud, liae-ud, …
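To make the naming scheme concrete, here is a small sketch that splits an archi string into its base (‘df’ or ‘liae’) and its option keys (‘u’, ‘d’), and maps the two legacy names mentioned above to their new form. The function `parse_archi` and the `LEGACY_NAMES` table are hypothetical and only illustrate the convention; they are not DeepFaceLab's own parser.

```python
# Legacy names and their replacements, as listed in the changelog entry.
LEGACY_NAMES = {"dfuhd": "df-u", "liaeuhd": "liae-u"}


def parse_archi(name: str):
    """Split an archi name such as 'liae-ud' into (base, set of option keys)."""
    name = LEGACY_NAMES.get(name, name)
    base, _, flags = name.partition("-")
    if base not in ("df", "liae"):
        raise ValueError(f"unknown base archi: {base!r}")
    unknown = set(flags) - {"u", "d"}
    if unknown:
        raise ValueError(f"unknown archi options: {sorted(unknown)}")
    return base, set(flags)


if __name__ == "__main__":
    for archi in ("df", "df-d", "liae-ud", "dfuhd"):
        print(archi, parse_archi(archi))
```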

Not the best example of 448 df-ud trained on 11GB:

Improved GAN training (GAN_power option). It was used for dst model, but actually we don’t need it for dst.

Instead, a second src GAN model with x2 smaller patch size was added, so the overall quality for hi-res models should be higher.

Added option ‘Uniform yaw distribution of samples (y/n)’:

Helps to fix blurry side faces due to small amount of them in the faceset.

Quick96:

Now based on df-ud archi and 20% faster.

XSeg trainer:

Improved sample generator.

Now it randomly adds the background from other samples.

Result is reduced chance of random mask noise on the area outside the face.

Now you can specify ‘batch_size’ in range 2-16.

Reduced size of samples with applied XSeg mask. Thus size of packed samples with applied xseg mask is also reduced.

April 15, 2020

XSegEditor: added view lock at the center by holding shift in drawing mode.

Merger: color transfer “sot-m”: speed optimization of 5-10%.

Fixed a minor bug in the sample loader.

April 14, 2020

Merger: optimizations

color transfer ‘sot-m’ : reduced color flickering, but consumes x5 more time to process

added mask mode ‘learned-prd + learned-dst’ – produces largest area of both dst and predicted masks

XSegEditor : polygon is now transparent while editing

New example data_dst.mp4 video

New official mini tutorial https://www.youtube.com/watch?v=1smpMsfC3ls

April 6, 2020

Fixes for 16+ cpu cores and large facesets.

added 5.XSeg) data_dst/data_src mask for XSeg trainer – remove.bat

removes labeled xseg polygons from the extracted frames

April 5, 2020

Fixed bug with input dialog in Windows 10

Fixed running XSegEditor when directory path contains spaces

SAEHD: ‘Face style power’ and ‘Background style power’ are now available for whole_face

New help messages for these options.

XSegEditor: added button ‘view trained XSeg mask’, so you can see which frames should be masked to improve mask quality.

Merger:

added ‘raw-predict’ mode. Outputs raw predicted square image from the neural network.

mask-mode ‘learned’ replaced with 3 new modes:

‘learned-prd’ – smooth learned mask of the predicted face

‘learned-dst’ – smooth learned mask of DST face

‘learned-prd*learned-dst’ – smallest area of both (default)
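One plausible reading of these mode names is that the masks are combined element-wise: the product keeps only the area covered by both masks, while the ‘learned-prd + learned-dst’ mode from the April 14 entry would correspond to a sum clipped to 1, covering the area of either mask. The sketch below illustrates that reading on plain nested lists of floats in [0, 1]. The function `combine_masks` is hypothetical, and the clipped-sum interpretation is an assumption, not confirmed Merger code.

```python
def combine_masks(prd, dst, mode):
    """Combine two same-sized masks (nested lists of floats in [0, 1])."""
    if mode == "learned-prd":
        return [row[:] for row in prd]
    if mode == "learned-dst":
        return [row[:] for row in dst]
    if mode == "learned-prd*learned-dst":
        # Product: non-zero only where both masks are non-zero (smallest area).
        return [[p * d for p, d in zip(pr, dr)] for pr, dr in zip(prd, dst)]
    if mode == "learned-prd + learned-dst":
        # Clipped sum: non-zero where either mask is non-zero (largest area).
        return [[min(1.0, p + d) for p, d in zip(pr, dr)] for pr, dr in zip(prd, dst)]
    raise ValueError(f"unknown mask mode: {mode}")


if __name__ == "__main__":
    prd = [[1.0, 0.5], [0.0, 0.0]]
    dst = [[1.0, 0.0], [1.0, 0.0]]
    print(combine_masks(prd, dst, "learned-prd*learned-dst"))
    print(combine_masks(prd, dst, "learned-prd + learned-dst"))
```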

Added new face type : head

Now you can replace the head.

Example: https://www.youtube.com/watch?v=xr5FHd0AdlQ

Requirements:

Post processing skill in Adobe After Effects or Davinci Resolve.

Usage:

1. Find suitable dst footage with a monotonous background behind the head

2. Use “extract head” script

3. Gather rich src headset from only one scene (same color and haircut)

4. Mask whole head for src and dst using XSeg editor

5. Train XSeg

6. Apply trained XSeg mask for src and dst headsets

7. Train SAEHD using ‘head’ face_type as regular deepfake model with DF archi. You can use pretrained model for head. Minimum recommended resolution for head is 224.

8. Extract multiple tracks, using Merger:

a. Raw-rgb

b. XSeg-prd mask

c. XSeg-dst mask

9. Using AAE or DavinciResolve, do:

a. Hide source head using XSeg-prd mask: content-aware-fill, clone-stamp, background retraction, or other technique

b. Overlay new head using XSeg-dst mask

Warning: A head faceset can be used for whole_face or smaller face types of training only with XSeg masking.

March 30, 2020

New script:

5.XSeg) data_dst/src mask for XSeg trainer – fetch.bat

Copies faces containing XSeg polygons to aligned_xseg\ dir.

Useful only if you want to collect labeled faces and reuse them in other fakes.

Now you can use trained XSeg mask in the SAEHD training process.

This means the default ‘full_face’ mask obtained from landmarks will be replaced with the mask obtained from the trained XSeg model.

Use:

5.XSeg.optional) trained mask for data_dst/data_src – apply.bat

5.XSeg.optional) trained mask for data_dst/data_src – remove.bat

Normally you don’t need it. You can use it if you want to use ‘face_style’ and ‘bg_style’ with obstructions.

XSeg trainer : now you can choose type of face

XSeg trainer : now you can restart training in “override settings”

Merger: XSeg-* modes now can be used with all types of faces.

Therefore the old MaskEditor, FANSEG models, and FAN-x modes have been removed, because the new XSeg solution is better, simpler and more convenient, and costs only 1 hour of manual masking for a regular deepfake.

March 25, 2020

SAEHD: added ‘dfuhd’ and ‘liaeuhd’ archi

uhd version is lighter than ‘HD’ but heavier than regular version.

liaeuhd provides more “src-like” result

comparison:

added new XSegEditor !

here is the new whole_face + XSeg workflow:

with XSeg model you can train your own mask segmentator for dst (and/or src) faces that will be used by the merger for whole_face.

Instead of using a pretrained segmentator model (which does not exist), you control which part of faces should be masked.

new scripts:

5.XSeg) data_dst edit masks.bat

5.XSeg) data_src edit masks.bat

5.XSeg) train.bat

Usage:

unpack dst faceset if packed

run 5.XSeg) data_dst edit masks.bat

Read tooltips on the buttons (en/ru/zn languages are supported)

mask the face using include or exclude polygon mode.

repeat for 50/100 faces,

!!! you don’t need to mask every frame of dst

only frames where the face is different significantly,

for example:

closed eyes

changed head direction

changed light

the more various faces you mask, the more quality you will get

Start masking from the upper left area and follow the clockwise direction.

Keep the same logic of masking for all frames, for example:

the same approximated jaw line of the side faces, where the jaw is not visible

the same hair line

Mask the obstructions using exclude polygon mode.

run XSeg) train.bat

train the model

Check the faces of ‘XSeg dst faces’ preview.

if some faces have wrong or glitchy mask, then repeat steps:

run edit

find these glitchy faces and mask them

train further or restart training from scratch

Restart training of XSeg model is only possible by deleting all ‘model\XSeg_*’ files.

If you want to get the mask of the predicted face (XSeg-prd mode) in merger, you should repeat the same steps for src faceset.

New mask modes available in merger for whole_face:

XSeg-prd – XSeg mask of predicted face -> faces from src faceset should be labeled

XSeg-dst – XSeg mask of dst face -> faces from dst faceset should be labeled

XSeg-prd*XSeg-dst – the smallest area of both

if workspace\model folder contains trained XSeg model, then merger will use it, otherwise you will get transparent mask by using XSeg-* modes.

Some screenshots:

XSegEditor:

March 15, 2020

This release mainly adds the XSeg model. For detailed steps, see: https://www.deepfaker.xyz/?p=1648

global fixes

SAEHD: removed option learn_mask, it is now enabled by default

removed liaech archi

removed support of extracted(aligned) PNG faces. Use old builds to convert from PNG to JPG.

added XSeg model.

with XSeg model you can train your own mask segmentator of dst (and src) faces that will be used in merger for whole_face.

Instead of using a pretrained model (which does not exist), you control which part of faces should be masked.

Workflow is not easy, but at the moment it is the best solution for obtaining the best quality of whole_face’s deepfakes using minimum effort without rotoscoping in AfterEffects.

new scripts:

XSeg) data_dst edit.bat

XSeg) data_dst merge.bat

XSeg) data_dst split.bat

XSeg) data_src edit.bat

XSeg) data_src merge.bat

XSeg) data_src split.bat

XSeg) train.bat

Usage:

unpack dst faceset if packed

run XSeg) data_dst split.bat

this script extracts (previously saved) .json data from jpg faces to use in label tool.

run XSeg) data_dst edit.bat

new tool ‘labelme’ is used

use polygon (CTRL-N) to mask the face

name polygon “1” (one symbol) as include polygon

name polygon “0” (one symbol) as exclude polygon

‘exclude polygons’ will be applied after all ‘include polygons’

Hot keys:

ctrl-N create polygon

ctrl-J edit polygon

A/D navigate between frames

ctrl + mousewheel image zoom

mousewheel vertical scroll

alt+mousewheel horizontal scroll

repeat for 10/50/100 faces,

you don’t need to mask every frame of dst,

only frames where the face is different significantly,

for example:

closed eyes

changed head direction

changed light

the more various faces you mask, the more quality you will get

Start masking from the upper left area and follow the clockwise direction.

Keep the same logic of masking for all frames, for example:

the same approximated jaw line of the side faces, where the jaw is not visible

the same hair line

Mask the obstructions using polygon with name “0”.

run XSeg) data_dst merge.bat

this script merges .json data of polygons into jpg faces, therefore faceset can be sorted or packed as usual.

run XSeg) train.bat

train the model

Check the faces of ‘XSeg dst faces’ preview.

if some faces have wrong or glitchy mask, then repeat steps:

split

run edit

find these glitchy faces and mask them

merge

train further or restart training from scratch

Restart training of XSeg model is only possible by deleting all ‘model\XSeg_*’ files.

If you want to get the mask of the predicted face in merger, you should repeat the same steps for src faceset.

New mask modes available in merger for whole_face:

XSeg-prd – XSeg mask of predicted face -> faces from src faceset should be labeled

XSeg-dst – XSeg mask of dst face -> faces from dst faceset should be labeled

XSeg-prd*XSeg-dst – the smallest area of both

if workspace\model folder contains trained XSeg model, then merger will use it, otherwise you will get transparent mask by using XSeg-* modes.

Some screenshots:

label tool: https://i.imgur.com/aY6QGw1.jpg

trainer : https://i.imgur.com/NM1Kn3s.jpg

merger : https://i.imgur.com/glUzFQ8.jpg

example of the fake using 13 segmented dst faces: https://i.imgur.com/wmvyizU.gifv
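The labelling rule from the usage steps of this entry (polygons named “1” include, polygons named “0” exclude, and excludes are applied after all includes) can be sketched per pixel as follows. This is an illustrative sketch only: `point_in_polygon` is a standard ray-casting test, `is_masked` and the `(label, points)` tuple layout are hypothetical, and none of it is taken from the labelme or XSeg code.

```python
def point_in_polygon(x, y, polygon):
    """Ray-casting test: True if (x, y) lies inside the polygon.

    polygon is a list of (x, y) vertices in order.
    """
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the horizontal line through y can be crossed.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def is_masked(x, y, polygons):
    """polygons: list of (label, points); "1" = include, "0" = exclude.

    Excludes are applied after all includes, so an exclude always wins.
    """
    included = any(lbl == "1" and point_in_polygon(x, y, pts) for lbl, pts in polygons)
    excluded = any(lbl == "0" and point_in_polygon(x, y, pts) for lbl, pts in polygons)
    return included and not excluded


if __name__ == "__main__":
    face = ("1", [(0, 0), (10, 0), (10, 10), (0, 10)])
    obstruction = ("0", [(4, 4), (6, 4), (6, 6), (4, 6)])
    print(is_masked(2, 2, [face, obstruction]))
    print(is_masked(5, 5, [face, obstruction]))
```

Because excludes are evaluated last, the order in which polygons are drawn does not matter in this sketch.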

February 28, 2020

Extractor:

image size for all faces is now 512 (up from 256)

fix RuntimeWarning during the extraction process

SAEHD:

max resolution is now 512

fix hd architectures. Some decoder weights had not been trained before.

new optimized training:

for every <batch_size*16> samples, the model collects the <batch_size> samples with the highest error and learns them again

therefore hard samples will be trained more often
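The hard-sample scheme described above can be sketched as a small loop: train on normal batches while recording per-sample errors, and once batch_size*16 samples have been seen, replay the batch_size samples with the highest error. Everything here is illustrative: `train_step` is a hypothetical callable that trains on a batch and returns one error per sample, and the real SAEHD implementation may track errors differently.

```python
def select_hard_samples(samples, errors, batch_size):
    """Return the batch_size samples with the highest recorded error."""
    ranked = sorted(range(len(samples)), key=lambda i: errors[i], reverse=True)
    return [samples[i] for i in ranked[:batch_size]]


def train_with_hard_mining(batches, train_step, batch_size, window=16):
    """Train on each batch; after batch_size*window samples, replay the hardest ones.

    train_step(batch) is assumed to return a list with one error per sample.
    """
    seen, errors = [], []
    for batch in batches:
        batch_errors = train_step(batch)
        seen.extend(batch)
        errors.extend(batch_errors)
        if len(seen) >= batch_size * window:
            train_step(select_hard_samples(seen, errors, batch_size))
            seen, errors = [], []


if __name__ == "__main__":
    calls = []

    def fake_train_step(batch):
        # Toy stand-in: the "error" of a sample is just its own value.
        calls.append(list(batch))
        return [float(s) for s in batch]

    toy_batches = [[2 * i, 2 * i + 1] for i in range(16)]
    train_with_hard_mining(toy_batches, fake_train_step, batch_size=2)
    print(len(calls), calls[-1])
```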

‘models_opt_on_gpu’ option is now available for multigpus (before only for 1 gpu)

fix ‘autobackup_hour’

February 23, 2020

SAEHD: pretrain option is now available for whole_face type

fix sort by abs difference

fix sort by yaw/pitch/best for whole_face’s

February 21, 2020

Trainer: decreased time of initialization

Merger: fixed some color flickering in overlay+rct mode

SAEHD:

added option Eyes priority (y/n)

Helps to fix eye problems during training like “alien eyes” and wrong eyes direction (especially on HD architectures) by forcing the neural network to train eyes with higher priority.

before/after https://i.imgur.com/YQHOuSR.jpg

added experimental face type ‘whole_face’

Basic usage instruction: https://i.imgur.com/w7LkId2.jpg

‘whole_face’ requires skill in Adobe After Effects.

To use whole_face you have to extract whole_face’s by using

4) data_src extract whole_face

and

5) data_dst extract whole_face

Images will be extracted in 512 resolution, so they can be used for regular full_face’s and half_face’s.

‘whole_face’ covers the whole area of the face, including the forehead, in the training square, but the training mask is still ‘full_face’, therefore it requires manual final masking and composing in Adobe After Effects.

added option ‘masked_training’

This option is available only for ‘whole_face’ type.

Default is ON.

Masked training clips the training area to the full_face mask, thus the network will train the faces properly.

When the face is trained enough, disable this option to train all area of the frame.

Merge with ‘raw-rgb’ mode, then use Adobe After Effects to manually mask, tune color, and compose the whole face including the forehead.

February 3, 2020 (DFL2.0)

“Enable autobackup” option is replaced by

“Autobackup every N hour” 0..24 (default 0 disabled), Autobackup model files with preview every N hour

Merger:

‘show alpha mask’ now on ‘V’ button

‘super resolution mode’ is replaced by

‘super resolution power’ (0..100) which can be modified via ‘T’ ‘G’ buttons

default erode/blur values are 0.

new multiple faces detection log: https://i.imgur.com/0XObjsB.jpg

now uses all available CPU cores ( before max 6 )

so the more processors, the faster the process will be.

February 1, 2020

Merger:

increased speed

improved quality

SAEHD: default archi is now ‘df’

January 30, 2020

removed use_float16 option

fix MultiGPU training

January 29, 2020

MultiGPU training:

fixed CUDNN_STREAM errors.

speed is significantly increased.

Trainer: added key ‘b’ : creates a backup even if the autobackup is disabled.

January 28, 2020

optimized face sample generator, CPU load is significantly reduced

fixed the update of the preview history after disabling the pretrain mode

SAEHD:

added new option

GAN power 0.0 .. 10.0

Train the network in Generative Adversarial manner.

Forces the neural network to learn small details of the face.

You can enable/disable this option at any time, but it is better to enable it when the network is trained enough.

Typical value is 1.0

GAN power with pretrain mode will not work.

Example of enabling GAN on 81k iters +5k iters

dfhd: default Decoder dimensions are now 48

the preview for 256 res is now correctly displayed

fixed model naming/renaming/removing

Improvements for those involved in post-processing in AfterEffects:

Codec is reverted back to x264 in order to properly use in AfterEffects and video players.

Merger now always outputs the mask to workspace\data_dst\merged_mask

removed raw modes except raw-rgb

raw-rgb mode now outputs selected face mask_mode (before square mask)

‘export alpha mask’ button is replaced by ‘show alpha mask’.

You can view the alpha mask without recomputing the frames.

8) ‘merged *.bat’ now also output ‘result_mask.’ video file.

8) ‘merged lossless’ now uses x264 lossless codec (before PNG codec)

result_mask video file is always lossless.

Thus you can use result_mask video file as mask layer in the AfterEffects.

January 25, 2020

Upgraded to TF version 1.13.2

Removed the wait at first launch for most graphics cards.

Increased speed of training by 10-20%, but you have to retrain all models from scratch.

SAEHD:

added option ‘use float16’

Experimental option. Reduces the model size by half.

Increases the speed of training.

Decreases the accuracy of the model.

The model may collapse or not train.

Model may not learn the mask in large resolutions.

You can enable/disable this option at any time.

true_face_training option is replaced by

“True face power”. 0.0000 .. 1.0

Experimental option. Discriminates the result face to be more like the src face. Higher value – stronger discrimination.

Comparison – https://i.imgur.com/czScS9q.png

January 23, 2020

SAEHD: fixed clipgrad option

January 22, 2020

BREAKING CHANGES !!!

Getting rid of the weakest link – AMD cards support.

All neural network codebase transferred to pure low-level TensorFlow backend, therefore removed AMD/Intel cards support, now DFL works only on NVIDIA cards or CPU.

old DFL marked as 1.0 still available for download, but it will no longer be supported.

global code refactoring, fixes and optimizations

Extractor:

now you can choose on which GPUs (or CPU) to process

improved stability for < 4GB GPUs

increased speed of multi gpu initializing

now works in one pass (except manual mode)

so you won’t lose the processed data if something goes wrong before the old 3rd pass

Faceset enhancer:

now you can choose on which GPUs (or CPU) to process

Trainer:

now you can choose on which GPUs (or CPU) to train the model.

Multi-gpu training is now supported.

Select identical cards, otherwise the fast GPU will wait for the slow GPU every iteration.

now remembers the previous option input as default with the current workspace/model/ folder.

the number of sample generators now matches the available number of processors

saved models now have names instead of GPU indexes.

Therefore you can switch GPUs for every saved model.

Trainer offers to choose latest saved model by default.

You can rename or delete any model using the dialog.

models now save the optimizer weights in the model folder to continue training properly

removed all models except SAEHD, Quick96

trained model files from DFL 1.0 cannot be reused

AVATAR model is also removed.

How to create AVATAR like in this video? https://www.youtube.com/watch?v=4GdWD0yxvqw

1) capture yourself with your own speech repeating same head direction as celeb in target video

2) train regular deepfake model with celeb faces from target video as src, and your face as dst

3) merge celeb face onto your face with raw-rgb mode

4) compose masked mouth with target video in AfterEffects

SAEHD:

now has 3 options: Encoder dimensions, Decoder dimensions, Decoder mask dimensions

now has 4 archis: dfhd (default), liaehd, df, liae

df and liae are from SAE model, but use features from SAEHD model (such as combined loss and disable random warp)

dfhd/liaehd – changed encoder/decoder architectures

decoder model is combined with mask decoder model

mask training is combined with face training,

result is reduced time per iteration and decreased vram usage by optimizer

“Initialize CA weights” now works faster and is integrated into the “Initialize models” progress bar

removed optimizer_mode option

added option ‘Place models and optimizer on GPU?’

When you train on one GPU, by default model and optimizer weights are placed on GPU to accelerate the process.

You can place them on CPU to free up extra VRAM, thus you can set larger model parameters.

This option is unavailable in MultiGPU mode.

pretraining now does not use rgb channel shuffling

pretraining now can be continued

when pre-training is disabled:

1) iters and loss history are reset to 1

2) in df/dfhd archis, only the inter part of the encoder is reset (before encoder+inter)

thus the fake will train faster with a pretrained df model

Merger ( renamed from Converter ):

now you can choose on which GPUs (or CPU) to process

new hot key combinations to navigate and override frame’s configs

super resolution upscaler “RankSRGAN” is replaced by “FaceEnhancer”

FAN-x mask mode now works on GPU while merging (before on CPU), therefore all models (Main face model + FAN-x + FaceEnhancer) now work on GPU while merging, and work properly even on 2GB GPU.

Quick96:

now automatically uses pretrained model

Sorter:

removed all sort by *.bat files except one sort.bat

now you have to choose sort method in the dialog

Other:

all console dialogs are now more convenient

new default example video files data_src/data_dst for newbies ( Robert Downey Jr. on Elon Musk )

XnViewMP is updated to 0.94.1 version

ffmpeg is updated to 4.2.1 version

ffmpeg: video codec is changed to x265

_internal/vscode.bat starts VSCode IDE where you can view and edit DeepFaceLab source code.

removed russian/english manual. Read community manuals and tutorials here

https://mrdeepfakes.com/forums/forum-guides-and-tutorials

new github page design

January 11, 2020

fix freeze on sample loading

January 8, 2020

fixes and optimizations in sample generators

fixed Quick96 and removed lr_dropout from SAEHD for OpenCL build.

CUDA build now works on lower-end GPU with 2GB VRAM:

GTX 880M GTX 870M GTX 860M GTX 780M GTX 770M

GTX 765M GTX 760M GTX 680MX GTX 680M GTX 675MX GTX 670MX

GTX 660M GT 755M GT 750M GT 650M GT 745M GT 645M GT 740M

GT 730M GT 640M GT 735M GT 730M GTX 770 GTX 760 GTX 750 Ti

GTX 750 GTX 690 GTX 680 GTX 670 GTX 660 Ti GTX 660 GTX 650 Ti GTX 650 GT 740